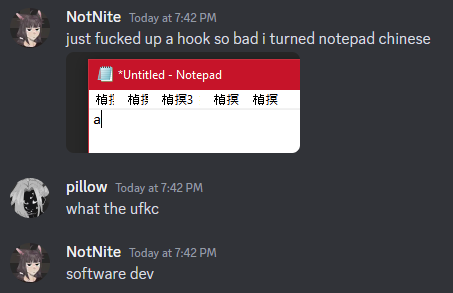

why computer glitches sometimes spit out nonsensical Chinese

have you ever stumbled upon a glitch like this?

it doesn't take a genius to figure out that something is going wrong here. but why?

when computers store text, they store it as sequences of numbers. for some ungodly reason[1], some computer systems don't use UTF-8 internally, and instead choose to use UTF-16.

UTF-16 encodes each character as two bytes[2]. this means that the exact order in which those bytes are stored matters a lot more than in some other encodings. to illustrate the problem, here's some sample text, and the corresponding bytes that represent it as UTF-16:

| S | a | m | p | l | e | ␣ | t | e | x | t | . |
|---|---|---|---|---|---|---|---|---|---|---|---|
| 00 53 | 00 61 | 00 6D | 00 70 | 00 6C | 00 65 | 00 20 | 00 74 | 00 65 | 00 78 | 00 74 | 00 2E |
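if you want to reproduce those bytes yourself, here's a minimal sketch in Python. it assumes big-endian UTF-16 with no byte order mark, since that's what the table shows; the variable names are just for illustration.

```python
text = "Sample text."

# big-endian UTF-16 without a byte order mark, matching the table above
data = text.encode("utf-16-be")

# group the bytes in pairs: one pair per character for this text
pairs = [data[i:i + 2].hex(" ").upper() for i in range(0, len(data), 2)]

for char, pair in zip(text, pairs):
    print(repr(char), pair)
```

every pair starts with `00` because all of these characters sit in the first 256 code points, which is exactly what makes the misread so dramatic.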

now, what happens if we interpret the byte boundaries in the wrong spots?

| � | 匀 | 愀 | 洀 | 瀀 | 氀 | 攀 | ␣ | 琀 | 攀 | 砀 | 琀 | . |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 00 | 53 00 | 61 00 | 6D 00 | 70 00 | 6C 00 | 65 00 | 20 00 | 74 00 | 65 00 | 78 00 | 74 00 | 2E |
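the same misread can be provoked on purpose. this is a rough sketch, not a reconstruction of any particular buggy program: it simulates the slip by dropping the first byte, and also shows that decoding with the opposite byte order produces nearly the same garbage.

```python
data = "Sample text.".encode("utf-16-be")

# simulate a one-byte slip: every pair now straddles two original characters
shifted = data[1:]

# the final byte has no partner, so ask the decoder to substitute
# a replacement character instead of raising an error
garbled_shift = shifted.decode("utf-16-be", errors="replace")

# reading the untouched bytes with the wrong byte order has a similar effect
garbled_swap = data.decode("utf-16-le")

print(garbled_shift)
print(garbled_swap)
```

note that `20 00` decodes to U+2000, a whitespace character, which is why one of the garbled "characters" looks like a gap.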

as you can see, we've gone from perfectly normal text to something unintelligible at best and invalid at worst, without actually changing the underlying bytes one bit.

this sort of error can be caused in a couple of different ways; usually it's either by reading the bytes backwards[3] or out of sync due to a program error, or by having an odd number of bytes get lost in transmission somewhere on a network. usually, most of the text can be restored manually, although you may end up losing a couple of characters in the latter case.
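restoring the text mostly means turning the garbage back into bytes and reading them properly. the two helpers below are hypothetical names of my own, and the second one assumes the lost byte was a leading `00`, which only holds for text like the example here.

```python
def repair_swapped(garbled: str) -> str:
    """undo a byte-order mix-up: re-encode one way, decode the other."""
    return garbled.encode("utf-16-le").decode("utf-16-be")


def repair_shifted(garbled: str) -> str:
    """undo a one-byte slip, assuming the missing byte was a leading 0x00."""
    # drop any replacement characters left over from the dangling last byte
    raw = garbled.rstrip("\ufffd").encode("utf-16-be")
    # put the assumed 0x00 back in front and drop the now-unpaired final byte
    realigned = b"\x00" + raw[:-1]
    return realigned.decode("utf-16-be")


data = "Sample text.".encode("utf-16-be")

print(repair_swapped(data.decode("utf-16-le")))
print(repair_shifted(data[1:].decode("utf-16-be", errors="replace")))
```

the swapped case comes back intact, while the shifted case loses the final `.`, because the byte that carried it was never decoded into a real character.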

1. UTF-16 used to make some amount of sense back when there were fewer than 2^16 (65,536) characters in Unicode.

2. unless you're using anything outside the Basic Multilingual Plane, like Emoji.

3. the ordering of bytes is called "endianness" by nerds.
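to make footnotes 2 and 3 a bit more concrete, here's a small sketch. the emoji is an arbitrary pick, and the byte order mark check relies on Python's plain `"utf-16"` codec, which prepends one when encoding.

```python
import codecs

# footnote 2: a character outside the Basic Multilingual Plane
emoji = "\U0001F600"
encoded = emoji.encode("utf-16-be")
print(len(encoded), encoded.hex(" ").upper())  # 4 D8 3D DE 00

# footnote 3: the plain "utf-16" codec writes a byte order mark first,
# so a reader can tell which endianness the rest of the bytes use
with_bom = "S".encode("utf-16")
has_bom = with_bom[:2] in (codecs.BOM_UTF16_LE, codecs.BOM_UTF16_BE)
print(has_bom)
```

the four bytes are a surrogate pair: two 16-bit units that only mean something together, which is one more way for out-of-sync reads to go wrong.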